How to Prove AI Agent ROI to Enterprise Clients: A Billing-First Approach

Most AI agent companies lose enterprise renewals not because their product failed, but because they couldn't prove it worked. The fix is not a better deck. It is billing infrastructure that treats value proof as a core function, not an afterthought.

Automated ROI reporting for enterprise AI agent deployments works by instrumenting every agent action at the billing layer, capturing the cost, outcome, and value of each event in real time, then delivering customer-specific proof automatically. No manual reporting. No QBR scramble. The evidence accumulates continuously, and your customer sees it before you ever ask them to renew.

Why enterprise AI clients churn even when the agent works

The renewal problem in enterprise AI is not technical. It's evidential.

Your agent is running. It's calling tools, completing tasks, deflecting tickets, writing proposals. But somewhere between the agent doing the work and the CFO signing the renewal, the value disappears. Nobody captured it. Nobody reported it. When procurement asks "what did we actually get for this?", your customer cannot answer.

Agents make this worse than traditional SaaS in one specific way. When a company buys a tool their team sits in front of every day, value is felt. Usage is visible. Adoption is the proof. Agents operate in the background. They are cognitively invisible to the people paying for them, which means the value they create has to be made explicit, consistently, in the customer's own numbers and, most importantly, in the customer's own language.

This is the churn pattern almost every AI-native company hits in year one: high deployment, low renewal, confused post-mortems. The agents are not broken. The reporting infrastructure is missing.

What is automated ROI reporting for AI agent deployments?

Definition: Automated ROI reporting for AI agent deployments is a system that captures the value of every agent action at the event level, calculates that value per customer using agreed baselines, and delivers proof automatically on a defined cadence. ROI is not assembled retrospectively. It's emitted in real time, as the work happens, and surfaced to the customer without manual intervention.

This is distinct from two things teams often confuse it with:

- Usage reporting tells you what ran: tasks completed, tokens consumed, sessions initiated. It is an engineering metric.

- Performance analytics tells you how reliably it ran: uptime, error rate, latency. Also an engineering metric.

Automated ROI reporting tells your customer what it was worth, in time saved, cost avoided, or revenue generated, in their numbers, not industry averages. Enterprise renewals are won on that third category, not the first two.
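The gap between a usage metric and an ROI metric can be sketched in a few lines. The example below is illustrative only: it assumes a hypothetical per-customer baseline (how long a person takes per task and the customer's own loaded hourly cost, both agreed up front), and the names `Baseline` and `roi_from_usage` and all figures are invented for the sketch, not taken from any product API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Baseline:
    """Per-customer baseline agreed up front (all values are assumptions)."""
    human_minutes_per_task: float  # how long a person takes today
    loaded_hourly_cost: float      # the customer's own cost per staff hour


def roi_from_usage(tasks_completed: int, agent_minutes_per_task: float,
                   baseline: Baseline) -> dict:
    """Turn a usage metric (tasks completed) into value metrics."""
    # Time saved is net of the time the agent itself spent on each task.
    saved_minutes = tasks_completed * (
        baseline.human_minutes_per_task - agent_minutes_per_task
    )
    hours_saved = saved_minutes / 60
    return {
        "tasks_completed": tasks_completed,    # usage: what ran
        "hours_saved": round(hours_saved, 1),  # ROI: time saved
        "cost_avoided": round(hours_saved * baseline.loaded_hourly_cost, 2),
    }


baseline = Baseline(human_minutes_per_task=30, loaded_hourly_cost=60.0)
print(roi_from_usage(tasks_completed=200, agent_minutes_per_task=3,
                     baseline=baseline))
```

With these made-up inputs, 200 tasks at 27 minutes saved each come to 90 hours and 5,400 in cost avoided. The same usage count would produce a different figure for a customer with a different baseline, which is why the baseline has to be theirs rather than an industry average.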

How to evaluate enterprise tools for real-time monitoring of AI agent revenue

Not all agent infrastructure has billing and value proof built in. Most observability tools tell you what happened. Platforms built for AI agent monetisation tell you what it was worth, and then tell your customer automatically.

When evaluating enterprise tools for real-time monitoring of AI agent revenue, score them across five criteria:

- Event-level granularity — does it capture every tool call and agent action with cost, duration, and outcome metadata attached, or only session-level summaries? Per-action visibility is the foundation of credible ROI claims.

- Per-customer margin visibility — does it break down your costs and revenue by customer, not just in aggregate? Your biggest account might be your least profitable one. You need to know before renewal, not after.

- Automated value delivery — does it send proof to customers automatically on a defined cadence, or does it require your CS team to export and build a deck? Manual is not scalable. Automatic is the baseline.

- Billing and value in one system — is the ROI data tied directly to the invoice, so customers see what they got in the same breath as what they paid? Separation creates friction and doubt. Unity creates confidence.

- No-code configuration — can your ops or CS team update what gets measured and reported without an engineering sprint? If it requires a developer to change a value metric, it will not get updated, and your reporting will drift from what your customer actually cares about.

Platforms that require you to build custom reporting pipelines on top of generic observability tooling are solving the wrong problem. You need infrastructure where billing and value proof are the same system.
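One way to keep an evaluation against these five criteria consistent across vendors is a simple scorecard. The sketch below is an assumption about how a team might do it, not a standard method: the criterion keys, the 0 to 5 rating scale, and the equal weighting are all hypothetical choices.

```python
CRITERIA = (
    "event_level_granularity",
    "per_customer_margin_visibility",
    "automated_value_delivery",
    "billing_and_value_unified",
    "no_code_configuration",
)


def score_tool(ratings: dict[str, int], max_rating: int = 5) -> float:
    """Return a score from 0 to 1; every criterion must be rated 0..max_rating."""
    missing = [name for name in CRITERIA if name not in ratings]
    if missing:
        raise ValueError(f"unrated criteria: {missing}")
    for name in CRITERIA:
        if not 0 <= ratings[name] <= max_rating:
            raise ValueError(f"{name} must be between 0 and {max_rating}")
    # Equal weighting: each criterion contributes one fifth of the score.
    return sum(ratings[name] for name in CRITERIA) / (max_rating * len(CRITERIA))


# Hypothetical ratings for a monitoring-only tool.
monitoring_only = {
    "event_level_granularity": 5,
    "per_customer_margin_visibility": 1,
    "automated_value_delivery": 0,
    "billing_and_value_unified": 0,
    "no_code_configuration": 2,
}
print(score_tool(monitoring_only))
```

Refusing to score a tool with an unrated criterion is deliberate: a tool that is excellent on event capture alone should not look complete just because the commercial criteria were left blank.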

The three layers of automated ROI reporting that win enterprise renewals

Layer 1: Real-time event monitoring

You cannot report on what you did not capture. Every AI agent handling enterprise work should emit structured events at each decision point: tool called, task completed, escalation triggered, resolution confirmed.

This is not just for debugging. It is the raw material for every ROI claim you will ever make. A platform with real-time event monitoring turns your agent's activity log into an auditable value record, one that procurement can interrogate and a CFO can trust.

What good looks like: per-action cost visibility so you know your margin, per-customer event history so you can build their specific story, and per-action timing data so you can quantify time saved from measurement rather than estimation.
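A structured event of the kind described above might look like the following sketch. The schema is an assumption for illustration: `AgentEvent`, `EventType`, and every field name are hypothetical, and a real platform will define its own event format. The four event types mirror the decision points named earlier.

```python
import json
from dataclasses import dataclass, asdict, field
from datetime import datetime, timezone
from enum import Enum


class EventType(str, Enum):
    TOOL_CALLED = "tool_called"
    TASK_COMPLETED = "task_completed"
    ESCALATION_TRIGGERED = "escalation_triggered"
    RESOLUTION_CONFIRMED = "resolution_confirmed"


@dataclass
class AgentEvent:
    customer_id: str       # needed for per-customer history and margin
    agent_id: str
    event_type: EventType
    cost_usd: float        # what this action cost you to run
    duration_ms: int       # how long the action took
    outcome: str           # e.g. "success", "failed", "escalated"
    occurred_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        """Serialise to one JSON line, ready for a log or event stream."""
        payload = asdict(self)
        payload["event_type"] = self.event_type.value
        return json.dumps(payload, sort_keys=True)


event = AgentEvent(
    customer_id="cust_123",
    agent_id="support-agent",
    event_type=EventType.TASK_COMPLETED,
    cost_usd=0.042,
    duration_ms=1850,
    outcome="success",
)
print(event.to_json())
```

Carrying `customer_id`, `cost_usd`, and `duration_ms` on every event is what lets the same record serve debugging, margin tracking, and customer-facing proof without a second data pipeline.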

Layer 2: Per-customer margin tracking

Enterprise deals are not uniform. Your biggest customer might also be your least profitable one, if their use cases are computationally heavy, require frequent human escalation, or invoke expensive third-party tools.

According to Andreessen Horowitz's analysis of early AI-native companies, gross margins in agentic AI can vary by 40 percentage points or more across customers in the same cohort, depending on task complexity and model usage. Without per-customer margin tracking, you are pricing on averages and subsidising your worst accounts without knowing it.

When ROI reporting is grounded in real margin data, it becomes honest. You can show a customer exactly what it cost you to deliver their outcomes, which builds trust, justifies pricing, and turns the renewal conversation from a negotiation into a collaboration.
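Per-customer margin tracking reduces to summing event costs per customer and comparing them with that customer's revenue. The sketch below assumes events have already been reduced to `(customer_id, cost_usd)` pairs and that revenue per customer is known; the function name, customer names, and figures are hypothetical.

```python
from collections import defaultdict


def margin_by_customer(events, revenue_by_customer):
    """Compute gross margin per customer.

    events: iterable of (customer_id, cost_usd) pairs.
    revenue_by_customer: {customer_id: revenue_usd} for the same period.
    Customers with cost but no revenue entry are not reported.
    """
    cost = defaultdict(float)
    for customer_id, cost_usd in events:
        cost[customer_id] += cost_usd

    report = {}
    for customer_id, revenue in revenue_by_customer.items():
        spent = cost[customer_id]
        # Margin is undefined when there is no revenue to divide by.
        margin_pct = (revenue - spent) / revenue * 100 if revenue else None
        report[customer_id] = {
            "revenue": revenue,
            "cost": round(spent, 2),
            "gross_margin_pct": None if margin_pct is None else round(margin_pct, 1),
        }
    return report


events = [("acme", 300.0), ("acme", 500.0), ("globex", 100.0)]
revenue = {"acme": 1000.0, "globex": 500.0}
for customer, row in margin_by_customer(events, revenue).items():
    print(customer, row)
```

In this made-up data the blended margin is 40 percent, which hides the fact that the larger account runs at 20 percent while the smaller one runs at 80 percent. That is the pricing-on-averages problem in miniature.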

Layer 3: Automated customer value receipts

This is the output layer. Not a PDF your CS manager builds the week before a QBR. An automated, customer-specific proof-of-value document, delivered monthly, that shows exactly what the agent did, what it cost them, and what it saved or generated.

Before (manual, reactive):

- CS team pulls logs two weeks before renewal

- Builds a deck with aggregate stats

- Customer challenges specific numbers

- Deal slows while you locate source data

- You discount to close

After (automated, continuous):

- Value receipt delivered monthly, automatically

- Per-task breakdown: "Your agent resolved 847 support tickets this month. Average resolution time: 4 minutes. Human equivalent: 2.8 hours per ticket. Time returned to your team: roughly 2,315 hours."

- Customer shares it internally before renewal is even on the table

- Renewal is a formality

The shift is not just operational. It changes the power dynamic entirely. You stop defending your price and start reminding your customer what losing your product costs.
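The per-task breakdown in a value receipt can be generated directly from captured events rather than assembled by hand. The following is a minimal sketch under stated assumptions: it takes the agent's handling time for each resolved ticket plus a human baseline agreed with the customer, and the function name, customer name, and numbers are hypothetical.

```python
from statistics import mean


def build_value_receipt(customer, resolution_minutes, human_minutes_per_ticket):
    """Summarise one month of resolved tickets as a plain-text receipt.

    resolution_minutes: agent handling time for each resolved ticket.
    human_minutes_per_ticket: baseline agreed with the customer (assumption).
    """
    if not resolution_minutes:
        raise ValueError("no resolved tickets to report")
    tickets = len(resolution_minutes)
    # Time returned is net of the time the agent itself spent.
    saved_minutes = tickets * human_minutes_per_ticket - sum(resolution_minutes)
    return "\n".join([
        f"Value receipt for {customer}",
        f"Tickets resolved: {tickets:,}",
        f"Average resolution time: {mean(resolution_minutes):.1f} minutes",
        f"Human equivalent: {human_minutes_per_ticket:g} minutes per ticket",
        f"Time returned to your team: {saved_minutes / 60:,.1f} hours",
    ])


print(build_value_receipt("Acme Corp", [4, 6, 5, 5], human_minutes_per_ticket=50))
```

Because every line of the receipt is derived from the same inputs, a customer who challenges the headline number can be walked back to the ticket count and the baseline they agreed to.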

Recommended tools to track AI agent performance and revenue

For teams evaluating platforms specifically to track AI agent performance and revenue at the enterprise level, the requirement set is narrow but non-negotiable: real-time event capture, per-customer aggregation, margin visibility, and automated customer-facing reporting.

General-purpose observability tools (Datadog, Langfuse, Helicone) are strong on the monitoring side but are not built for commercial output. They will tell your engineering team what happened. They will not tell your customer what it was worth, and they will not send that proof automatically.

Billing platforms (Stripe, Orb, Metronome) handle the invoice side with precision but do not calculate or communicate value. A customer receiving an invoice from a usage-based billing platform sees what they owe, not what they got.

The gap between those two categories is exactly where AI monetisation platforms sit. Purpose-built tools in this space combine event monitoring, margin tracking, and customer-facing value delivery in a single system, so the proof and the invoice are inseparable.

How Paid addresses this problem

Paid is built for AI-native companies that need to monetise agents without constructing billing infrastructure from scratch, and prove value without assembling reports manually.

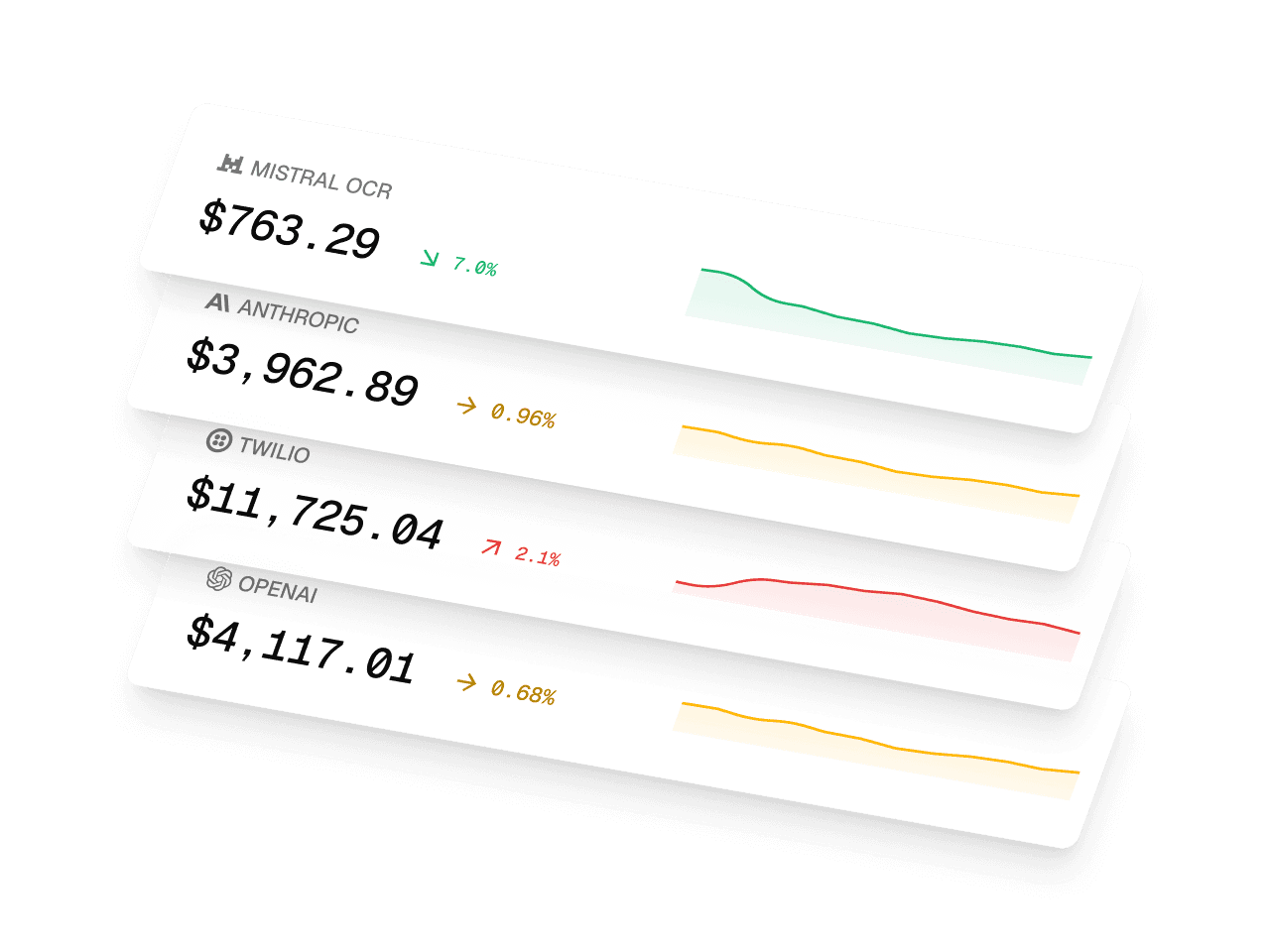

The Operator Dashboard gives you real-time cost and revenue visibility per customer, per agent, and per action. Margin Tracking surfaces where you are profitable and where you are not, before it becomes a renewal problem. Customer Value Receipts are automated, customer-specific proof-of-value documents sent on a cadence you control, without code.

The billing engine and the value proof live in the same platform. When your enterprise client asks "what did we actually get for this?", the answer is already in their inbox.

FAQ

What platforms provide automated ROI reporting for enterprise AI agent deployments?

Platforms that combine real-time event monitoring, per-customer margin tracking, and automated customer-facing value delivery in a single system. Paid.ai is purpose-built for this use case: it captures agent activity at the event level, calculates value per customer against agreed baselines, and sends automated Customer Value Receipts on a defined cadence, without custom engineering work.

How do I track AI agent performance and revenue for enterprise clients?

Instrument every agent action as a structured event with cost, duration, and outcome metadata. Aggregate these per customer, not per cohort, and tie them to revenue through your billing layer. The key shift is moving from engineering metrics (uptime, task completion rate) to commercial metrics (time saved, cost avoided, revenue generated). Tools like Paid.ai's Operator Dashboard give you both views in one place.

What should I look for when evaluating enterprise tools for real-time monitoring of AI agent revenue?

Five criteria matter: event-level granularity, per-customer margin visibility, automated value delivery to customers, billing and ROI data in a unified system, and no-code configurability for your ops team. Tools that require custom reporting pipelines built on top of generic observability platforms will create ongoing engineering overhead and will not scale with your customer base.

Why do enterprise AI deals fail at renewal even when the product works?

Because value was not captured and communicated in real time. Agents operate in the background and are cognitively invisible to the buyers funding them. Without automated proof of value delivered continuously throughout the contract, the renewal defaults to a re-sell of the original promise rather than a replay of demonstrated ROI.

What is the difference between usage reporting and ROI reporting for AI agents?

Usage reporting tells you what ran: tasks completed, tokens used, sessions initiated. ROI reporting tells your customer what it was worth, in time recaptured, cost avoided, or revenue generated, in their specific numbers. Enterprise renewals are won on demonstrated ROI. Usage data alone does not close them.

Enterprise clients do not churn because your agent failed. They churn because nobody proved it worked. Paid.ai is built to close that gap: automated value receipts, per-customer margin tracking, and a billing engine that treats proof of value as a core feature.
